Just a thought, which I decided to scribble down before I forgot it.

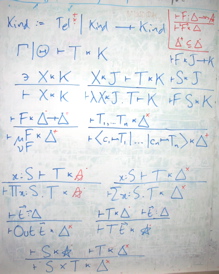

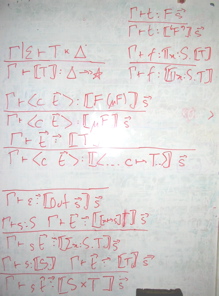

Key policy: Epigram is a declare-before-use language, temporally as well as spatially. When you say x, you get the most local existing x at that time, or you get a new x generated as locally as possible. In a declaration, spare names go into the generalisation prefix; in a rhs, spare names go into the where clause, one way or another. Naming is a surrogate for pointing, so once the bond of reference is created, it must not be broken by ill-propagated renamings, capturings, or whatever. It’s this policy which enables scope resolution at any point in time: there should never be any issue about the referent of an identifier.

Now, we go backwards as well as forwards, this time around, so what happens when you delete information. Well, there are at least two sorts of undo: devaluation (removing (parts of) the value of a symbol) and degeneration (removing the symbol altogether). Now, you propagate a devaluation by rechecking and devaluing everything which depends on a devalued symbol. Degeneration is much scarier. But I remember now, there’s a funny thing. If you set things up right, you don’t need degeneration, exactly…

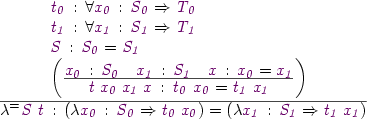

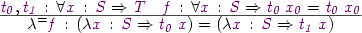

Imagine we have only let (the sooner we turn data into sugar for let, the better). You can devalue a let, replacing the top rhs by a shed. Now, key editing move: you can commute one let past another by turning

let f blah ; let g waffle

into

let g waffle ; let f blah

if waffle does not depend on f, or

let g waffle where f blah ; let f blah

otherwise. Degeneration is thus the repetition of this refactoring until the global f falls off the end!

(For data as it is now, you need to generate a bunch of lets for the type, its constructors and its various gadgets.)

In terms of what happens to typedness, we should try to ensure that devaluation cannot increase the amount of brown. Intuitively, removing information cannot increase the amount of stuff which is definitely wrong. Of course, we should expect yellow all over the shop.

I mention this largely because this is pretty fundamental stuff, in terms of the propagation of updates around the system. It’s rooted in the proof engine, below the elaboration layer, so the time to figure it out is now.

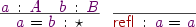

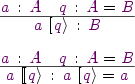

:

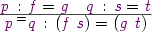

: